VSAN real capacity utilization

A few caveats make calculating and planning VSAN capacity tough, and it gets even harder when you try to map the plan to real consumption at the VSAN datastore level.

- VSAN disk objects are thin provisioned by default.

- Configuring full reservation of storage space through the Object Space Reservation rule in a Storage Policy does not mean the disk object's blocks will be inflated on the datastore. It only means the space will be reserved and shown as used in the VSAN Datastore Capacity pane. This makes it even harder to figure out why the size of “files” on the datastore does not match the other capacity-related information.

- To plan capacity you need to include the overhead of Storage Policies. Policies, plural, because I haven’t met an environment that uses only one for all kinds of workloads. This means planning should start with dividing workloads into groups that may require different levels of protection.

- Apart from disk objects there are other objects, especially SWAP, which are not displayed in the GUI and are easily forgotten. Depending on the size of the environment, they might consume a considerable amount of storage space.

- The VM SWAP object does not adhere to the Storage Policy assigned to the VM. What does that mean? Even if you configure your VM’s disks with PFTT=0, SWAP will always use PFTT=1 and be thick provisioned, unless you set the advanced option SwapThickProvisionDisabled to turn the thick provisioning off.
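The caveats above can be turned into a rough per-VM estimate. This is a minimal sketch, not a sizing tool: it assumes RAID-1 mirroring (so PFTT+1 full replicas), ignores witness components and metadata overhead, and assumes the VM has no memory reservation, so the swap object is as large as the configured memory. The names `VmSpec`, `max_disk_footprint_gb` and `swap_reserved_gb` are made up for illustration.

```python
from dataclasses import dataclass

SWAP_PFTT = 1  # swap ignores the VM's policy and uses PFTT=1 (see the last bullet)


@dataclass
class VmSpec:
    disk_gb: float
    memory_gb: float
    disk_pftt: int = 1       # PFTT from the storage policy assigned to the disks
    swap_thick: bool = True  # False models SwapThickProvisionDisabled being set


def replicas(pftt: int) -> int:
    # Assumption: RAID-1 mirroring keeps PFTT + 1 full copies of the data.
    return pftt + 1


def max_disk_footprint_gb(vm: VmSpec) -> float:
    # Upper bound for a thin disk: what it would take if fully written.
    return vm.disk_gb * replicas(vm.disk_pftt)


def swap_reserved_gb(vm: VmSpec) -> float:
    # Assumption: no memory reservation, so swap size equals configured memory.
    if not vm.swap_thick:
        return 0.0  # thin swap reserves nothing up front
    return vm.memory_gb * replicas(SWAP_PFTT)


# Example values: 2 GB disk, 1 GB memory, PFTT=1 policy on the disks.
vm = VmSpec(disk_gb=2, memory_gb=1)
print(max_disk_footprint_gb(vm), swap_reserved_gb(vm))
```

Summing these two figures per workload group, one group per storage policy, gives a worst-case number to compare against what the datastore actually reports.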

I ran a test to check how much space an empty VM consumes. (Empty here means without even an operating system.)

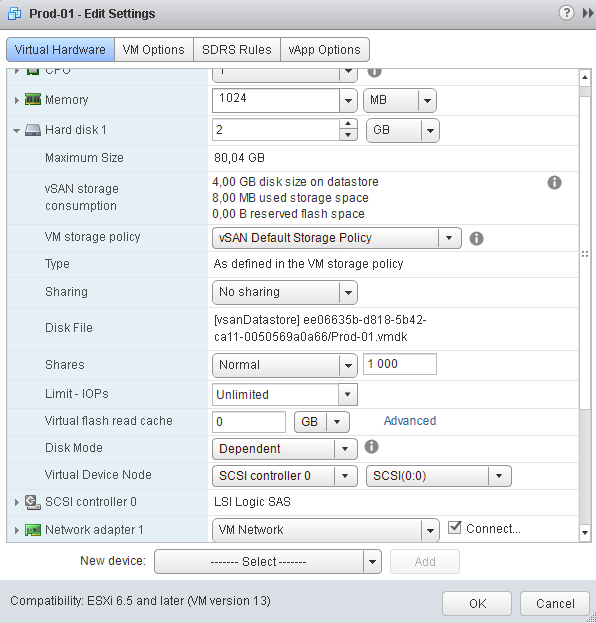

To check that, a VM called Prod-01 was created with 1 GB of memory, a 2 GB hard disk and the default storage policy assigned (PFTT=1).

According to the Edit Settings window, the VM disk size on the datastore is 4 GB (the maximum size based on disk size and policy: 2 GB × 2 replicas). However, used storage space is 8 MB, which means 2 replicas of 4 MB each. That is fine, as there is no OS installed at all.

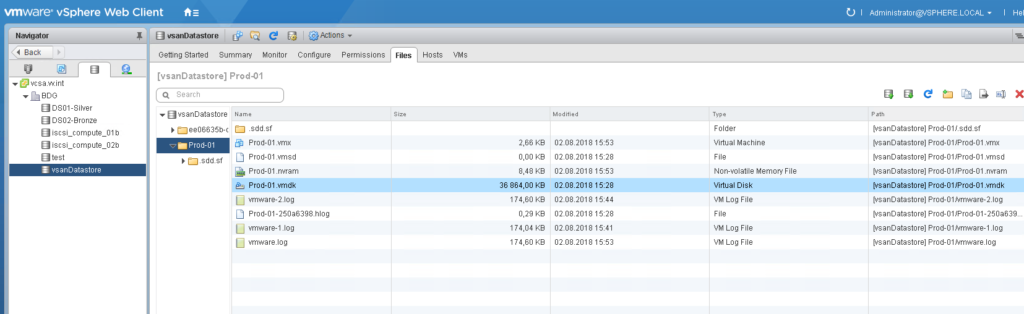

However, when you open the datastore file browser and look at the Virtual Disk object, you will notice that its size is 36 864 KB, which gives us 36 MB. So it’s neither 4 GB nor 8 MB as shown in Edit Settings.
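To make the mismatch easier to see, the three figures for the same virtual disk can be put in one unit. This is only a sketch of the arithmetic using the numbers from the screenshots; the dictionary labels are my own wording, and binary units (1 MB = 1024 KB) are assumed.

```python
KB_PER_MB = 1024
MB_PER_GB = 1024

# The same virtual disk, as reported in three different places.
figures_mb = {
    "max size (Edit Settings)": 4 * MB_PER_GB,
    "used space (Edit Settings)": 8,
    "virtual disk (datastore file browser)": 36_864 / KB_PER_MB,
}

for label, size_mb in figures_mb.items():
    print(f"{label}: {size_mb:g} MB")
```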

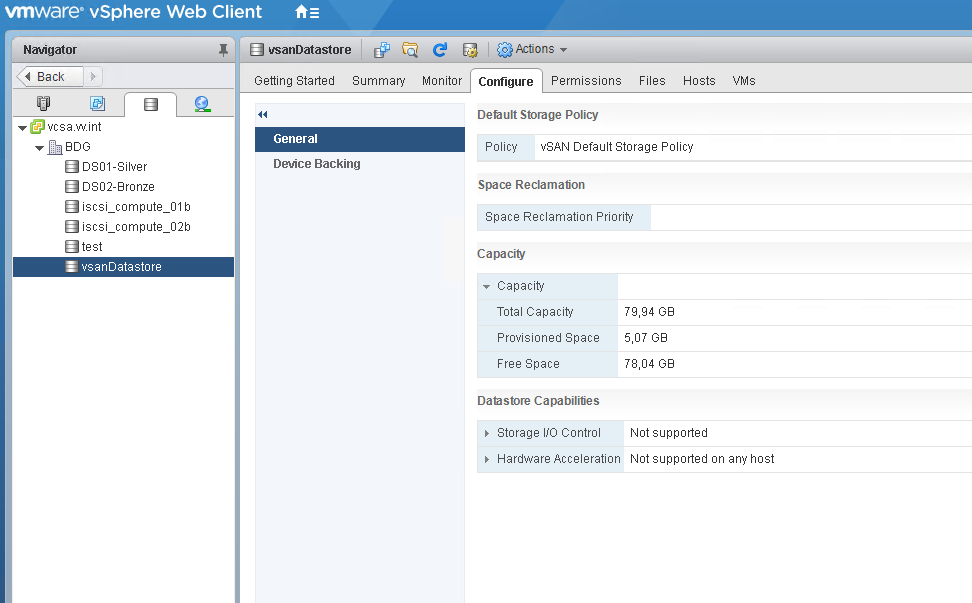

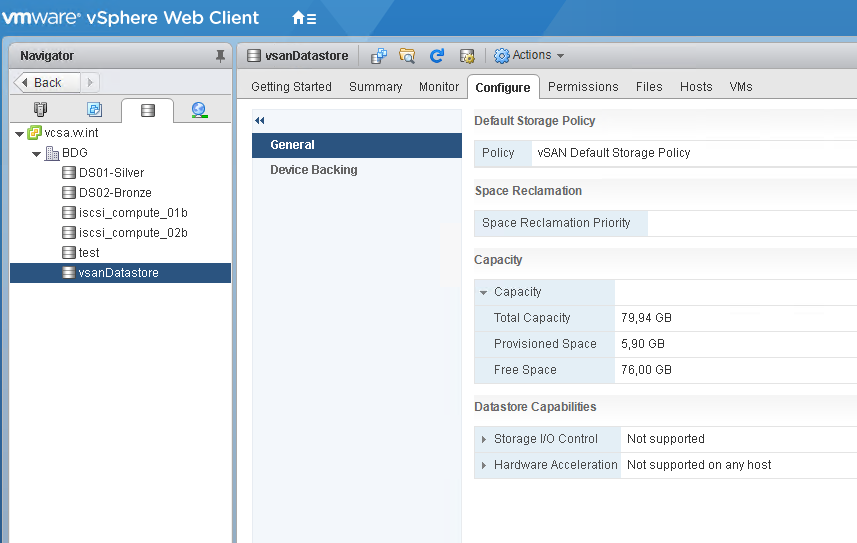

Meanwhile, datastore provisioned space is listed as 5.07 GB.

So let’s power on that VM.

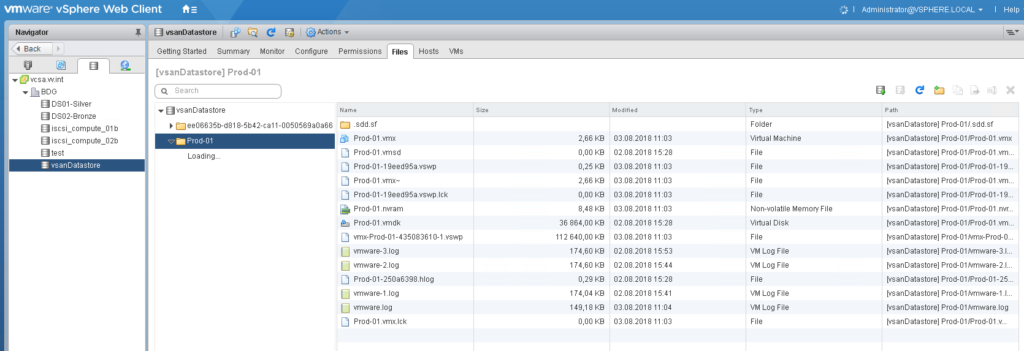

Now the disk size remains intact, but other files appear: for instance, SWAP has been created, as well as logs and other temporary files.

Looking at datastore provisioned space, it now shows 5.9 GB, which again is confusing. Even if we set the previous findings aside, powering on the VM triggers SWAP creation, and according to the theory SWAP should be protected with PFTT=1 and thick provisioned. If that’s the case, provisioned space should have increased by 2 GB (1 GB of memory × 2 replicas), not by 0.83 GB, part of which is consumed by logs and other small files in the Home namespace object.
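The gap between theory and observation can be written down explicitly. This is a small sketch using the values observed in this test; it assumes the swap object equals the VM's 1 GB of memory (no memory reservation) and is mirrored twice under PFTT=1.

```python
# Values observed on the datastore in this test (GB).
provisioned_before_power_on = 5.07
provisioned_after_power_on = 5.90

# Theory: thick swap object, sized to memory, mirrored for PFTT=1.
memory_gb = 1
swap_replicas = 2  # assumption: RAID-1 mirroring, PFTT + 1 copies
expected_increase = memory_gb * swap_replicas

observed_increase = round(
    provisioned_after_power_on - provisioned_before_power_on, 2
)
unexplained = round(expected_increase - observed_increase, 2)

print(f"expected +{expected_increase} GB, observed +{observed_increase} GB")
print(f"difference not accounted for: {unexplained} GB")
```

Note that the observed increase also includes logs and other Home namespace files, so the share attributable to swap alone is even smaller than the figure computed here.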

Moreover, during those observations I noticed that while the VM is booting, provisioned space peaks at 7.11 GB for a very short period of time, and after a few seconds this value decreases to 5.07 GB. Even after a few reboots those values stay consistent.

The question is why this information is not consistent, and what happens during VM boot that causes the peak in provisioned space?

That’s the quest for now: to figure it out 🙂
